
Introduction

Before reading on, please read the warnings in my blog post Learning Python: Web Scraping.

Scrapy is an application framework for crawling web sites and extracting structured data, which can be used for a wide range of applications such as data mining, information processing or historical archival. You can install Scrapy via pip.

Don’t use the python-scrapy package provided by Ubuntu; it is typically too old and slow to catch up with the latest Scrapy. Instead, install it with pip install scrapy.

Basic Usage

After installation, run python3 -m scrapy --help to print the help information.

A basic flow of Scrapy usage:

- Create a new Scrapy project.

- Write a spider to crawl a site and extract data.

- Export the scraped data using the command line.

- Change the spider to recursively follow links.

- Try out spider arguments.

Create a Project

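Create a new Scrapy project named soccer with python3 -m scrapy startproject soccer.

This creates a soccer directory that contains scrapy.cfg and a soccer Python package with items.py, middlewares.py, pipelines.py, settings.py and a spiders directory.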

Create our own spider class, a subclass of scrapy.Spider, in the file soccer_spider.py under the soccer/spiders directory. It defines the following attributes and methods:

- name: identifies the Spider. It must be unique within a project.

- start_requests(): must return an iterable of Requests which the Spider will begin to crawl from.

- parse(): a method that will be called to handle the response downloaded for each of the requests made.

Scrapy schedules the scrapy.Request objects returned by the start_requests method of the Spider.

Running Spider

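Go to the soccer root directory and run the spider with either the crawl command (python3 -m scrapy crawl followed by the spider name) or the runspider command (python3 -m scrapy runspider followed by the path to the spider file).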

Once you have the page content in response.body or in a locally saved file, you could use other libraries such as Beautiful Soup to parse it. Here, I will continue to use the methods provided by Scrapy to parse the content.

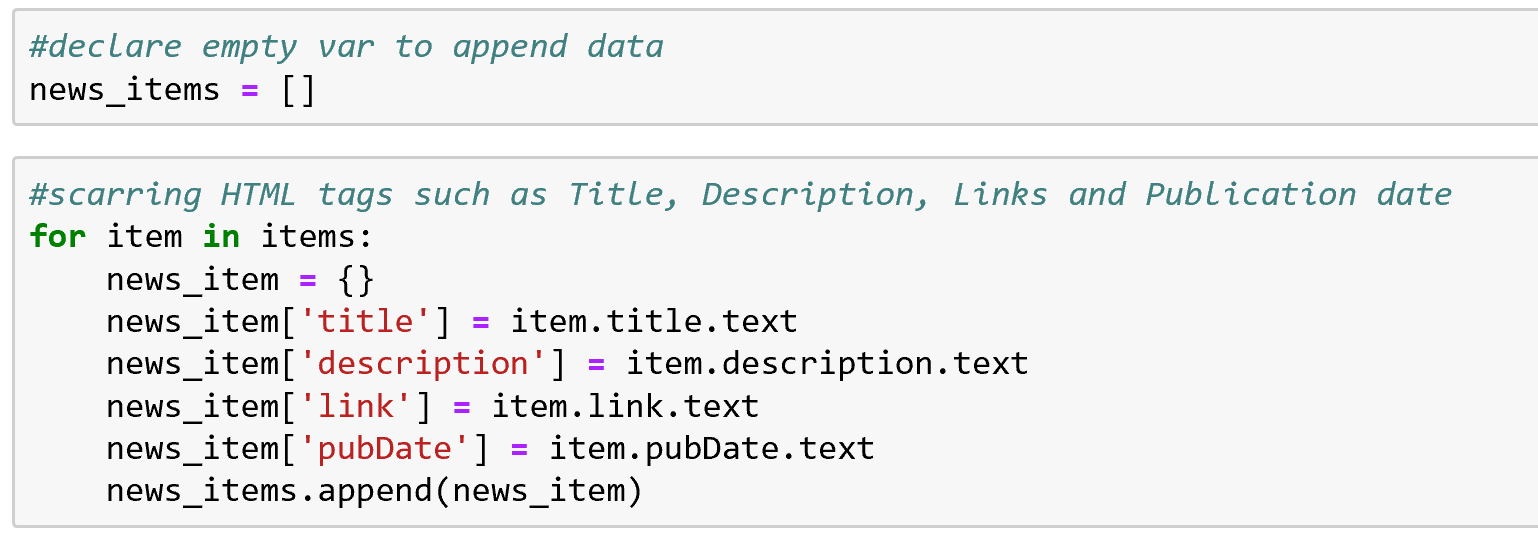

Extracting Data

Scrapy provides CSS selectors .css() and XPath .xpath() for the response object. Some examples:

With these, you can select data by element, CSS class or XPath expression. Add the extraction code to the parse() method.

Sometimes you may want to extract data from another page linked from the current one. In that case, find the link and yield another scrapy.Request for it, with a callback to handle the new response.

Use the .urljoin() method to build a full absolute URL (since sometimes the links can be relative).

Scrapy also provides another method .follow() that supports relative URLs directly.

Example

I will still use the data in UEFA European Cup Matches 2017/2018 as an example.

The HTML content in the page looks like:

I wrote a new class that extends scrapy.Spider and then ran it via Scrapy to extract the data.

I prefer using XPath because it is more flexible. Learn more about XPath in XML and XPath in W3Schools or other tutorials.

Further

You can use other commands such as python3 -m scrapy shell 'URL' to test your selectors interactively before writing your own spider.

More detailed information about Scrapy can be found in the official Scrapy documentation or in its GitHub repository.
